In the 1960s, IBM embarked on what Fortune Magazine called the $5 Billion Gamble. It was a bet-the-company investment on a scale nobody had ever seen before. The payoff was the legendary System/360 mainframes, which revolutionized computing and set the stage for two decades of IBM dominance.

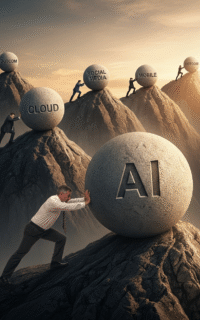

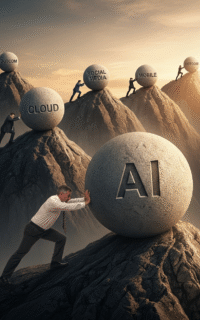

That $5 billion would be roughly the equivalent of $50 billion today, but even that princely sum is dwarfed by the $364 billion that tech giants are expected to invest in artificial intelligence this year. And the spending won’t stop there. McKinsey projects that building AI data centers alone could demand $5.2 trillion by 2030.

Today, the AI investment boom is probably the single biggest factor propping up the US economy. However, there is cause for concern. Throughout our history, great technological advances have led to overinvestment, crowding out of traditional industries and, eventually, a collapse triggering economic upheaval. Indicators suggest that’s where we’re headed now.


We’ve all heard the saying, “when you change the incentives you change the behavior,” and most of us even believed it at some point. But with experience, you find that human behavior doesn’t fit into such neat little boxes. People act the way they do for all kinds of reasons, some of them rational, some of them not.

The truth is that incentives often backfire because of something called Goodhart’s law. Once a measure becomes a target, it ceases to be a good measure. A classic example occurred when the British offered bounties for dead cobras in India. Instead of hunting cobras, people started breeding them, which, needless to say, didn’t solve the problem.

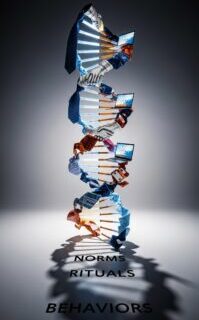

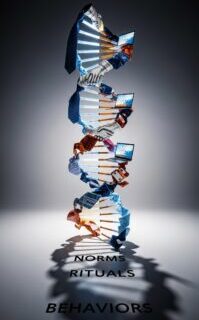

Smart leaders understand that behavior is downstream of culture. Behaviors rest on norms, and those norms are encoded by rituals that guide everything from how you hire to how you promote and how you determine compensation. That’s why you can’t just tweak incentives. You need to activate cultural triggers that shift norms from the inside out.


Every change starts with a grievance. There are things people don’t like and they want them to be different. At any given time in any organization, there are things that aren’t working as well as they should. Employee turnover is too high, sales are down and customers are complaining. For whatever reason, things need to change.

Smart leaders know that they can’t just stay mired in grievance. If you’re only focusing on problems, you’ll get caught up in an endless to-do list. It is no longer enough to simply plan and direct action. We must inspire and empower belief, and that means creating an aspirational vision that can form the basis of a shared purpose.

Yet you can’t just jump to the vision all at once. In the beginning, the idea is flawed and unproven, the organization isn’t ready for it and it is bound to incur visceral resistance in some quarters. Early on, ideas need to be protected and nurtured until they begin to gain some traction. As I explained in Cascades, the best way to do that is with a Keystone Change.

Everywhere I go in the world to speak or advise organizations, I hear the same complaint: “No one listens to my ideas.” I hear it from young professionals trying to launch their careers, mid-career managers navigating internal politics, and even senior leaders struggling to steer their organizations in a new direction.

We like to believe that good ideas rise to the top, that if something is smart or right, people will naturally get behind it. But history shows that’s not true. From antiseptics and cancer immunotherapy to Chester Carlson’s Xerox machine, even the most breakthrough ideas faced fierce resistance. Countless others never even saw the light of day.

As computing pioneer Howard Aiken put it, “Don’t worry about people stealing your ideas. If your ideas are any good, you’ll have to ram them down people’s throats.” Getting traction has less to do with persuasion or even the importance of the idea itself than it has to do with power. If you want your ideas to have an impact, you need to learn how to build influence.


The legendary physicist Max Planck once said, “A new scientific truth does not triumph by convincing its opponents and making them see the light, but rather because its opponents eventually die, and a new generation grows up that is familiar with it.” That may be a bit extreme, but the point still stands.

The status quo always has inertia on its side and never yields its power gracefully. To bring genuine change about, you not only have to point the way to a new and better reality, you also need to displace what people already know and are comfortable with. To do that, you’ll need to overcome resistance, both rational and irrational.

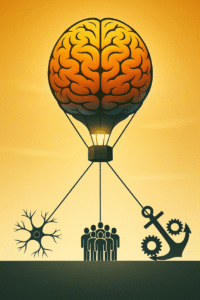

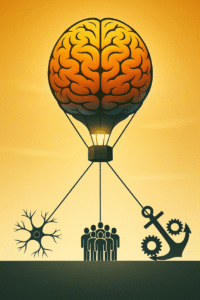

More specifically, you’ll need to overcome three forces that uphold the status quo: the pathways that shape our default thinking, the cultural norms that perpetuate familiar behaviors, and the economic structures that resist disruption. To create real transformation, leaders must help people unlearn entrenched assumptions and rewire the forces that keep them stuck.


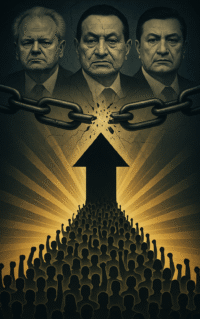

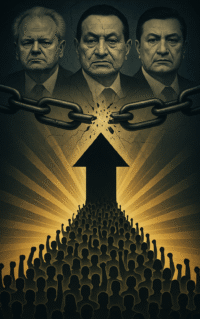

Gene Sharp lived most of his life as a relatively obscure academic. He wrote scholarly books that few read and gave lectures that few attended. His idea, that nonviolence could prevail over the most brutal dictators, seemed quixotic at best. While he gained a small cult following, he remained mostly unknown, even in scholarly foreign policy circles.

But then a group of young activists used his ideas to overthrow the Serbian strongman Slobodan Milošević. That led to an award-winning documentary narrated by Martin Sheen, which inspired activists in Georgia and then Ukraine to reach out. The Serbians trained them in Sharp’s methods, which led to the Rose Revolution and Orange Revolution, respectively.

Today, a quarter century later, Sharp’s methods have been successfully applied in more than 50 countries. I saw their power firsthand in Ukraine, and that experience inspired me to write Cascades, which showed how these same principles can be leveraged for organizational transformation. You can learn them too and put them into practice in your own life and work.


When Everett Rogers introduced the S-shaped diffusion curve in the first edition of his book, Diffusion of Innovations, he was directly following the data. Researchers like Elihu Katz had already begun studying how change spreads and noticed a consistent pattern in the adoption of hybrid corn and the antibiotic tetracycline.

Yet it was Rogers who shaped our understanding of how ideas spread. Publishing more than 30 books and 500 articles, he studied everything from technology adoption to family planning in remote societies and just about everything in between. In doing so, he laid the foundation for an evidence-based approach to change.

Still, while Rogers showed us how change works, he didn’t offer much insight into why it works that way. This is where Michael Morris’s book Tribal can be helpful. By exploring how our tribal instincts lead us to adopt—or resist—change, we can learn to work with human nature rather than against it. Smart leaders don’t try to override instinct. They harness it.


When Peter Drucker first met IBM CEO Thomas J. Watson in the 1930s, the legendary management thinker was somewhat baffled. “He began talking about something called data processing,” Drucker recalled, “and it made absolutely no sense to me. I took it back and told my editor, and he said that Watson was a nut, and threw the interview away.”

Things that change the world always arrive out of context for the simple reason that the world hasn’t changed yet. So we always struggle to see how things will look in the future. Visionaries vie for our attention, arguing for their theory of how things will fit together and impact our lives. Billions of dollars are bet on those competing claims.

This is especially true today, with AI making head-spinning advances. But we also need to ask: What if the future looked exactly like the past? Clearly, there have been big innovations since Drucker met Watson. How did those technologies impact the economy and shape our lives? If we want to know what to expect from the future, that’s where we should start.


In March 2024, Bill Anderson, pharma giant Bayer’s CEO, wrote an op-ed in Fortune vowing to bust bureaucracy, slash red tape, and eliminate layers of middle management to create a more agile and innovative enterprise. “Our radical reinvention will liberate our people while cutting 2 billion euros in annual costs by 2026,” he wrote.

I wrote soon after that what Anderson was doing wasn’t genuine transformation but had all the telltale signs of transformation theater: a false sense of urgency calling for drastic action, a rushed strategic process, with little or no time for analysis or dissent, and a large, premature public rollout to generate publicity for the effort.

Today, more than a year later, Bayer’s stock remains near all-time lows. Investors are increasingly frustrated and it’s not hard to see why. Anderson went into an organization that was already reeling and introduced even more stress and disruption, with predictable results. If you want to create genuine transformation, you need to start by creating a sense of safety.


Years ago, we had a manager named Ania running one of our publishing operations. She was well-liked, diligent, and responsible. Still, we felt the business needed a more creative spark, so we brought in a rising executive to take her place. Ania transitioned out gracefully and left the company on good terms.

Things turned out well. Our business thrived and Ania became a sought-after interior decorator, renowned for her creativity. The problem wasn’t that she lacked any creative ability. The problem was that we weren’t giving her the type of challenges that excited her. While she languished in our organization, she excelled in a different environment.

The truth is that there is no such thing as a “creative personality.” Leaders set the conditions for people to be creative. But as you set those conditions, you also narrow possibilities, making the environment fertile ground for some, but barren for others. Every leader needs to build the discipline to make those choices. Culture matters. You need to shape it with care.
