Every good salesperson knows the 7-step process in which you identify and qualify a prospect to understand their needs, then present your offer, overcome objections, close the sale and follow up. It’s proven so consistently effective that its concepts have been the standard for training salespeople for decades.

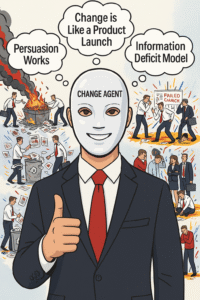

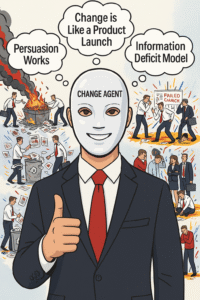

Many business leaders come up through sales and marketing, so it shouldn’t be surprising that they try to use similar persuasion techniques for large-scale change. They work to understand the needs of their target market, craft a powerful message, overcome any objections and then follow through on execution.

Unfortunately, that’s a terrible strategy. The truth is that the urge to persuade is often a red flag. It means you either have the wrong people or the wrong idea. Effective change strategy focuses on collective dynamics. Rather than trying to shape opinions, you’re much better off empowering early enthusiasts and then working to shape networks.

Change is often presented as an enigma. Unlike a traditional management task, you can’t just devise a plan and execute it. To be an effective change leader, you need to embrace a certain amount of uncertainty, because change, by definition, involves doing new things, and new things always carry some measure of unpredictability.

Still, that doesn’t mean change is mysterious. We actually know a lot about it. In Diffusion of Innovations, researcher Everett Rogers compiled hundreds of studies performed over many decades. Around the same time, Gene Sharp led a parallel effort to understand how large-scale political movements drive social and institutional change.

So while any change effort involves no small amount of uncertainty, there is also quite a bit of consistency. Much as Tolstoy remarked about families, successful transformations end up looking very much alike, while unsuccessful transformations each fail in their own way. Here are four numbers to keep in mind as you embark on your change journey.

Every year since 2010, I’ve posted an article about what trend I expect to dominate the next twelve months. Throughout the 2010s, these forecasts usually focused on emerging technologies or new currents in management thinking. But around 2020, that began to shift. The annual trends increasingly centered on how we cope with change rather than the change itself.

Last year, it was “The Coming Realignment.” History tends to unfold at a certain rhythm and then converge and cascade around certain points. Years like 1776, 1789, 1848, 1920, 1948, 1968, 1989 and, it seems, 2020 mark these inflection points. The years that follow are then spent absorbing the shock and navigating the fallout.

Today, everything is open to question. Will AI boom or bust? Will it take our jobs or bring new prosperity? What kind of economic system will we adopt for the future? We are in the midst of a great realignment. We know from previous inflection points that what comes after will be profoundly different from what came before, and what we most need to watch now is our institutions.

When Benoit Mandelbrot first started out as a young researcher at IBM, one of the first problems he was asked to tackle was noise in communication lines. What he noticed was a strange pattern: There would be long periods of continuity, punctuated by periods of discontinuity that persisted until a dominant pattern could establish itself again.

Mandelbrot called these forces of continuity and discontinuity Joseph Effects and Noah Effects. The first, named after the biblical story of seven good years followed by seven bad years, was continuous and predictable. The second was like the great flood that wiped everything clean.

Clearly, we’re in a period of discontinuity, and things will continue to be chaotic until a new set of paradigms can establish itself. When I look back on my writing over the past year, it’s clear that two things were on my mind: how to adapt to this period of realignment and how best to create new paradigms. Let me know what you think in the comments.

One of the great things about books is that they can take you out of your current context. You can go to a different period of history, explore another industry or even visit a different planet. That’s one reason I like doing these annual reading lists. They give me a record of the worlds I’ve chosen to enter in past years.

Looking back over the past year, it’s clear that how things work (or don’t) was very much on my mind. We can have great ideas and put together plans and strategies, but if we can’t execute on the ground, everything is bound to go off the rails. That’s probably why so many of the books I read this year are about how to make stuff work better.

Reading books is, quite simply, how I work through the ideas I’m grappling with and, even if I can’t always find answers, I can usually learn enough to start asking better questions. The books I’ve read over the past year have certainly helped me do that, and I hope they can do the same for you. Also, please let me know about the books you’ve read in the comments.

There’s an old myth that Inuit cultures have as many as a hundred words for snow. I remember learning about it in school, and there was just something wonderful about the idea that the sensory world can be so deeply rich and different. I guess that’s why, although it has been debunked many times, the Inuit snow myth keeps getting repeated.

There is also a lot of truth to the underlying concept. As anybody who has ever learned another language or lived in another culture knows, people’s perceptions vary widely. In The WEIRDest People in the World, Harvard’s Joseph Henrich documents how important and interesting these differences can be.

So if the Inuit snow myth highlights an important concept, many would argue that there’s no real harm in repeating it, in much the same way we continue to tell the apocryphal story of George Washington cutting down his father’s cherry tree. Yet truth matters. Once we start degrading it, we lose our ability to understand what is often a messy and nuanced world.

“It ain’t what you don’t know that gets you into trouble. It’s what you know for sure that just ain’t so.” The quote, often attributed to Mark Twain, endures because it so perfectly captures our everyday experience. You can learn things that you don’t know, but it’s incredibly difficult to unlearn something you believe in your heart to be true.

There’s real science behind this. Our experiences are encoded in the brain as connections between neurons, called synapses, which form and evolve over time. These connections strengthen as we use them and weaken when we do not. Or, as neuroscientists like to put it, neurons that fire together wire together.

That’s why leaders pursuing change often default to a manager’s mindset instead of a changemaker’s mindset: that’s what they know and what they’ve been successful with. Yet, just as in the quote above, managerial assumptions can undermine a transformational initiative. Here are three beliefs that sabotage change and what you can replace them with.

One of the most striking aspects of Sarah Wynn-Williams’ bestselling memoir Careless People, about her years at Meta, is the way she portrays Sheryl Sandberg. Contrary to Sandberg’s carefully crafted public image as a level-headed advocate for working women and their families, she is shown to be narcissistic, mercurial and hypocritical.

Whether you see Wynn-Williams’ book as an important exposé of big tech culture or a hit job by a disgruntled former employee, it’s hard to escape the sense that Sandberg’s public persona was more fantasy than reality. The image of a fabulously wealthy executive and doting mother living her best life every hour of the day was always a bit over the top.

There is clearly something unhealthy about the idealized images that we are constantly inundated with, as well as those equally curated versions that so many feel compelled to post on social media. Beyond the obvious psychological toll, the pressure to project constant perfection undermines the gritty, unglamorous work required to perform at a high level.

Take a moment to think about what the world must have looked like to J.P. Morgan more than a century ago, before his death in 1913. A shrewd investor in emerging technologies like railroads, automobiles, and electricity, he was also an early adopter, installing one of the first electric generators in his house. Today, we might call him a Techno-Optimist.

He could scarcely have imagined the dark days ahead: two world wars, the Great Depression, genocides, the rise of fascism and communism, and a decades-long Cold War. Had he lived to see it, he might have asked how, despite so many scientific and technological breakthroughs, things went so terribly wrong.

Today, we are at a similar juncture, and there are worrying parallels to the 1920s, including paradigm-shifting technologies, a revolt against immigration, globalism, income inequality and even a global pandemic. Now, like then, the choices we make will shape our future for decades to come. We need those who create the future to be rooted in the world we live in.

In 1998, five kids met in a cafe in Belgrade. Still in their 20s, they were, to all outward appearances, nothing special. They weren’t rich or powerful, didn’t hold important positions and didn’t have access to significant resources. Nevertheless, that day they conceived a plan to overthrow their country’s brutal Milošević regime.

The next day six friends joined them and they became the 11 founders of the activist group Otpor. A year later, Otpor numbered a few hundred members and it seemed that Milošević would be dictator for life. A year after that Otpor had grown to 70,000 and the Bulldozer Revolution brought down the once-unshakable dictator.

That’s how change works, in phases. Every transformational idea starts out weak, flawed and untested. It needs a quiet period to work out the kinks. Through trial and error, you see what works, begin to gain traction and eventually have the opportunity to create lasting change. If you’re serious about change, you need to learn the phases of change and manage them wisely.
